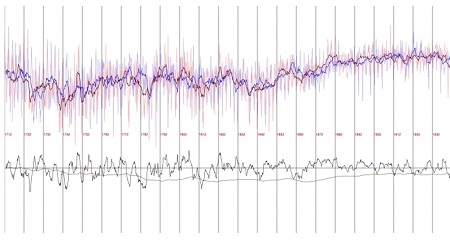

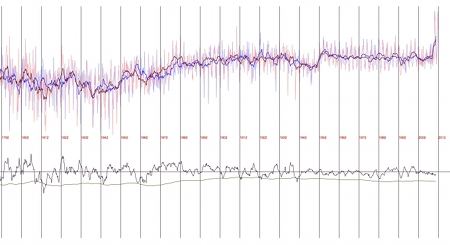

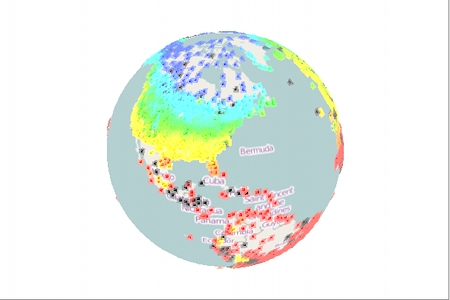

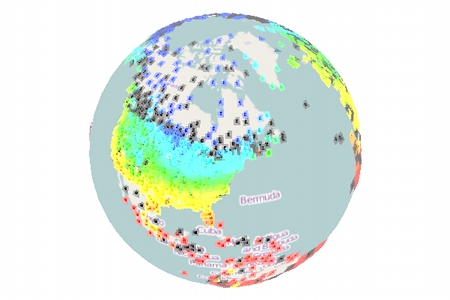

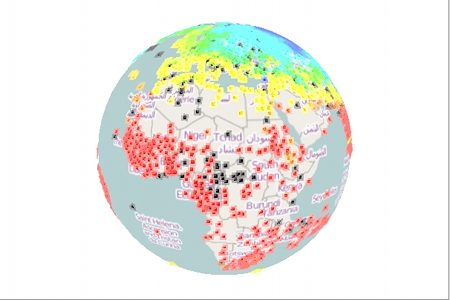

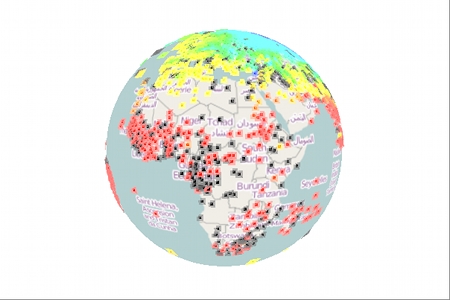

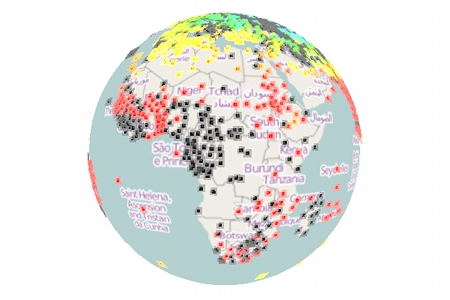

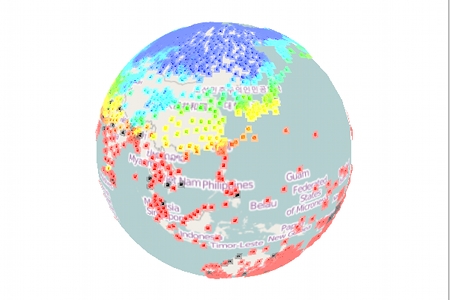

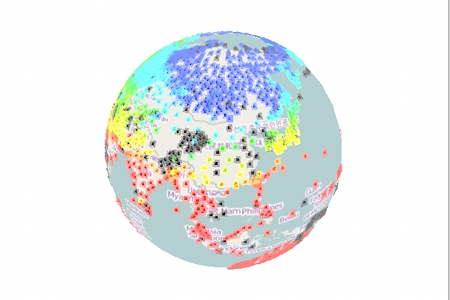

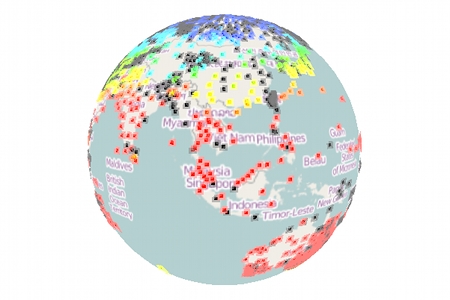

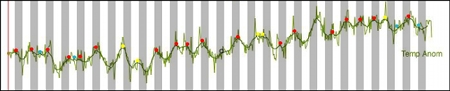

This post concerns a discussion on Azimuth. I found that the HADCRUT4-GL temperature anomaly curve, which shows the global combined land (CRUTEM4) and marine (sea surface temperature, SST) temperature anomalies over time, has a two-year periodicity (for more details click here). An anomaly is a deviation from a mean temperature. The dots in the image above are meant to show why I think so. The dark line drawn over the jagged anomaly curve is the mean curve. The grey strips are one year wide. A dot highlights a peak (or at least an upward bump) in the mean curve. More precisely, there are:

18 red dots, which mark peaks within a grey 2-year interval

5 yellow dots, which mark peaks outside a grey 2-year interval

(two of the yellow peaks are rather close together)

1 uncolored dot, which marks no real peak, just a bump

4 blue dots, which mark small peaks within ditches

One sees that the red and yellow dots cover more or less all peaks in the curve (the blue dots take care of the minor peaks, and there is just one bump, which is not a full peak). Since most of the red and yellow dots are red (18 of 23), this suggests that there is a peak roughly every 2 years, with an imprecision that is indicated by the width of the interval.
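The dot counting above was done by eye. A rough way to cross-check it would be to smooth the monthly series, find the local maxima of the smoothed curve, and look at the spacing between neighbouring peaks. The following is only a minimal sketch of that idea: the function names, the 5-month smoothing window, and the synthetic input series are all my assumptions for illustration, and none of this is the actual HADCRUT4-GL data or the method used to draw the image.

```python
import math


def moving_average(values, window):
    """Moving average over `window` consecutive samples.

    The result is shorter than the input by window - 1 samples.
    """
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]


def local_maxima(values):
    """Indices i where values[i] is strictly greater than both neighbours."""
    return [i for i in range(1, len(values) - 1)
            if values[i - 1] < values[i] > values[i + 1]]


def peak_spacings(values, window):
    """Distances (in samples) between successive peaks of the smoothed series."""
    smoothed = moving_average(values, window)
    peaks = local_maxima(smoothed)
    return [later - earlier for earlier, later in zip(peaks, peaks[1:])]


# Synthetic stand-in for ten years of monthly anomalies (NOT real data):
# a 24-month cycle plus a faster 5-month wiggle playing the role of jaggedness.
series = [math.sin(2 * math.pi * t / 24) + 0.3 * math.sin(2 * math.pi * t / 5)
          for t in range(120)]

# With monthly samples, a two-year periodicity should show up as
# spacings close to 24.
print(peak_spacings(series, 5))
```

On real data one would replace `series` with the monthly anomaly values and then check how many spacings land near 24 months, which is roughly what the share of red dots among the red and yellow ones expresses. The choice of smoothing window matters: a window that is too short leaves spurious small peaks, and one that is too long can wash out the two-year signal.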

Upon writing this post I saw that I forgot one red dot. Can you spot where?

Especially after doing this visualization, the periodicity now appears so visible to me that I think it should be a widely known phenomenon; however, nobody at Azimuth has heard of it yet. If it's not a bug, then I could imagine that it is at least partially due to differences in the solar irradiance for the northern and southern hemispheres, but so far this is just a wild guess and would need further investigation, which would cost me a lot of (unpaid) time and brainpower. So if you know what this phenomenon is called, please drop me a line. If it's not a bug, then this phenomenon appears to me to be an important fact which may, among other things, enter into the explanation for El Niño.