uncanny paintings

“empathic paintings” by Shugrina, Betke and Collomosse

You probably know those filters in e.g. Photoshop or GIMP with which you can make a photograph of last Sunday’s family vacation look like Seurat’s Sunday afternoon (let’s say almost :-)). These filters use so-called “painterly rendering algorithms”, i.e. mathematical algorithms which transform your image in the desired way. They belong to the class of “non-photorealistic rendering” (NPR) techniques, which have also been of some interest to the movie industry. Now imagine that your interface is not a boring Photoshop or GIMP navigation bar, but that you can choose the algorithm via your own facial expression. Then you have an “empathic painting” in the sense of Shugrina, Betke and Collomosse.

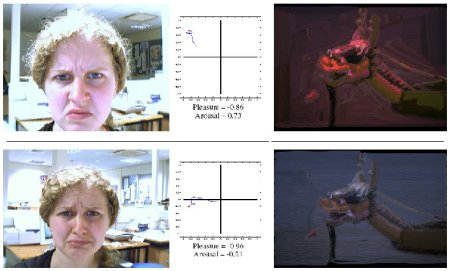

To build their device they use 8 facial expressions (read in via a webcam), classified by means of the Facial Action Coding System (FACS), map these onto a 2D parameter space (called Russell’s 2D pleasure-arousal space) and use this 2D space to control a painterly rendering algorithm (see image above). The device works in real time.
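To make this pipeline a bit more concrete, here is a minimal Python sketch of the idea: expression scores go in, a point in the pleasure-arousal plane comes out, and that point steers a few rendering parameters. Everything specific in it is my own assumption for illustration and is not taken from the paper: the expression labels, their coordinates, the function names `to_pleasure_arousal` and `render_params`, and the parameter formulas.

```python
# Hypothetical (pleasure, arousal) coordinates in [-1, 1].
# Illustrative values only, not the ones used by the authors.
EXPRESSION_COORDS = {
    "happy": (0.8, 0.5),
    "angry": (-0.7, 0.8),
    "afraid": (-0.6, 0.6),
    "sad": (-0.7, -0.5),
    "calm": (0.6, -0.6),
}


def to_pleasure_arousal(scores):
    """Blend per-expression classifier scores into one 2D point.

    `scores` maps expression labels to non-negative weights, as a
    (hypothetical) facial expression classifier might produce them.
    """
    total = sum(scores.values())
    if total <= 0:
        return (0.0, 0.0)  # no expression detected: neutral point
    pleasure = sum(EXPRESSION_COORDS[n][0] * s for n, s in scores.items()) / total
    arousal = sum(EXPRESSION_COORDS[n][1] * s for n, s in scores.items()) / total
    return (pleasure, arousal)


def render_params(pleasure, arousal):
    """Map a pleasure-arousal point to made-up painterly parameters."""
    return {
        # higher arousal: shorter, more agitated strokes (10 to 30 pixels)
        "stroke_length": 30.0 - 20.0 * (arousal + 1.0) / 2.0,
        # higher arousal: more saturated colours (0 to 1)
        "saturation": 0.5 + 0.5 * arousal,
        # higher pleasure: brighter image (0 to 1)
        "brightness": 0.5 + 0.5 * pleasure,
    }


if __name__ == "__main__":
    point = to_pleasure_arousal({"happy": 0.7, "calm": 0.3})
    print(point, render_params(*point))
```

In a real-time setting one would call these two functions for every webcam frame and hand the resulting parameters to the actual painterly renderer, which is the hard part and is left out here.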

Using gestures or facial expressions as an interface is nothing new, and they write themselves that their work is in the spirit of e.g. the work of Hertzmann and Perlin. However, I think that in principle a lot of research is still necessary before facial expressions and gestures can really be used for highly sensitive interfaces. The problems are similar to those in voice recognition (see e.g. previous post).

Remark: From the images on their website and in the paper it seems that e.g. anger gives a more red-coloured image, fear a blue one, etc. I am asking myself how much of these rendering decisions are culturally motivated.

January 29th, 2007 at 12:41 am

[…] So it is no wonder that people try to find laws, e.g. for when a (still) face looks attractive to others and when not. Facial expressions (see above image) play a significant role (see also this old randform post). But cultural things etc. are also important. But still – if we assume we have eliminated all these factors as best as possible (by e.g. comparing bold black and white faces of the same age group looking emotionless) – is there then still a link between the appearance of a face and the interpretation of the human character behind the face? How stable is this interpretation, e.g. when the face was distorted by violence or an accident? How much does the physical distortion parallel the psychological? […]